When people figure out how to do something that previously only nature had done, this new technology can completely transform the possibility space for a sector while making old ways of doing things obsolete – and all in a surprisingly short period of time.

While the time between when an idea is first dreamt up and when its first practical demonstration appears can be very long, the time between that first demonstration and the idea becoming commonplace can be remarkably short.

Flight – from mythology to reality

Cave paintings suggest that human beings have long imagined being like birds. A painting from the Stone Age discovered in Sulawesi, Indonesia, appears to depict the oldest-known mythological figures – including one that mixes human and bird-like features.

Artwork for many millennia has depicted winged lions, winged horses, winged dragons, winged humans, and winged gods. Yet for most of human history, the idea of human beings actually flying remained little more than a fantasy.

It was not until late 1903 that the Wright brothers flew in their powered, heavier-than-air craft for the first time. What for millennia had been a dream was now a reality. And within little more than a decade, that reality was on its way to becoming commonplace.

In 1914, the world’s first scheduled passenger airline service took off, operating between St. Petersburg and Tampa, Florida (a distance of roughly 20 miles, or about 32 kilometers). In 1919, the first regular international passenger air service started between London and Paris. In the span of just fifteen years, flying went from a fantastical dream to a regularly scheduled activity.

This feature is part of the ‘pattern of disruption’: an idea is formulated and remains science fiction for a very long time; but then science fiction turns into science fact more quickly than anyone thought possible.

Today’s food disruption was anticipated over a century ago

Now we are in that same early ‘take off’ period for a number of industries related to food, energy and transportation, among others. Many of the underlying ideas, like growing meat or milk without the use of animals, are older than any person now alive.

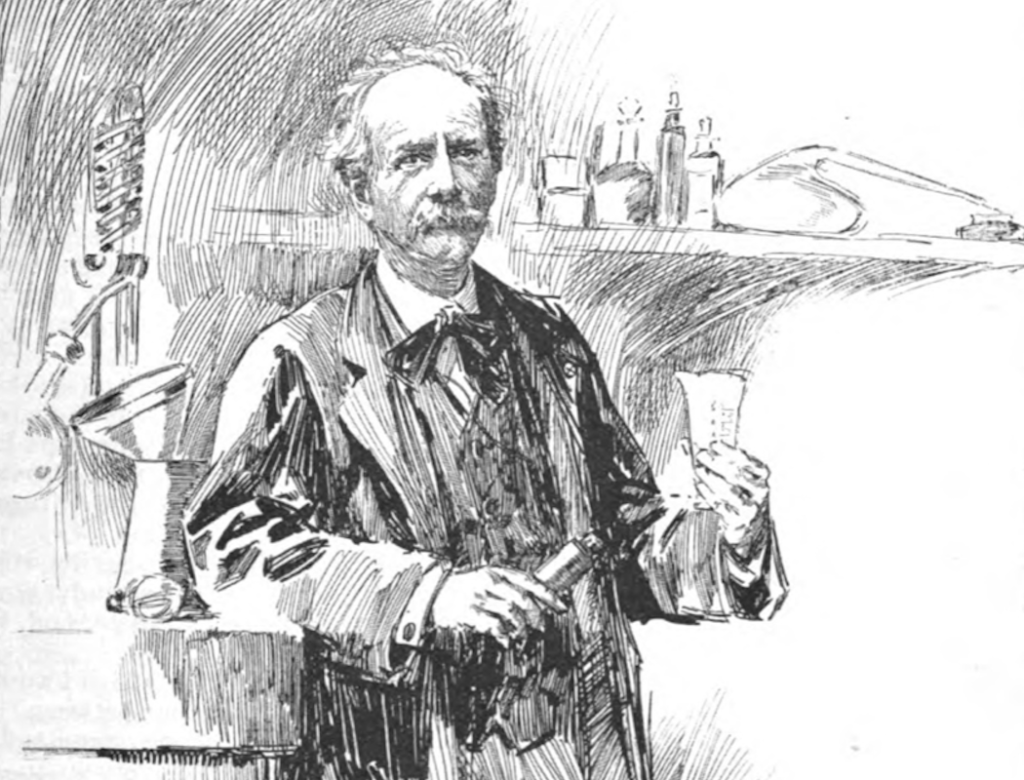

In his Paris office in September 1894, professor of chemistry Marcellin Berthelot gave a wide-ranging interview to McClure’s Magazine entitled ‘Foods in the Year 2000’. “The epicure of the future is to dine upon artificial meat,” he said. “Herds of cattle, flocks of sheep, and droves of swine will cease to be bred, because beef and mutton and pork will be manufactured direct from their elements.”

“There is no reason,” he continued, “why we should not before long make artificial milk… What is to prevent us, once we have gained the mastery, from making better milk, better meat, and better potatoes, at any season of the year, than those which nature gives us?”

Professor Marcellin Berthelot, as depicted in McClure’s Magazine, September 1894

At the time, Berthelot’s ideas were seen as fringe, if not absurd. It has taken more than a century, but these ideas are now starting to be realized. Precision fermentation and cellular agriculture start-up companies now sell cow’s milk proteins synthesized in Berthelot’s “glass cows”, and his imagined “brass beefsteak-machines” now produce the first meats.

Air travel became a commercial industry within little more than a decade of the first powered flight. Are we to believe it will take significantly longer to turn these fledgling food products into commercial industries?

In the course of the interview, the professor’s speculations flitted away from food and beverages to visit dyes and transportation, and also included what clean, abundant energy and new materials built up from their elements might mean for land use and human well-being.

Color revolutions and the transformation of textile industries

Today, we take for granted the vast availability and cheapness of colors in our clothing. If a group of fashion models were able to time-travel back just a few centuries, they might well be seen as strange, garish creatures simply because of the vast palette of colors they would be wearing, at a time when dyes were scarce and costly.

The earliest human settlements tended to use clothes in their natural colors – pale grey or white. As civilizations emerged, natural dyes were used, but often sparingly and specifically to distinguish gender and class hierarchies. When cheap, synthetic dyes burst on the scene, they transformed textile industries and cultural norms, with colors no longer retaining the strict social significance they once had.

Berthelot had foreseen part of the dye disruption too: “The chemists have now succeeded in making pure indigo direct from its elements and it will soon be a commercial product. Then the indigo fields … will be abandoned, industrial laboratories having usurped their place.”

Speaking in 1894, the professor of chemistry certainly knew that Adolf von Baeyer had discovered a method to synthesize indigo dye chemically in 1878 (work that contributed to his 1905 Nobel Prize in Chemistry). By 1897, the German company BASF had found a viable route to produce the first synthetic indigo commercially. The product was initially met with skepticism from incumbents, part of the pattern we have noticed with new technologies. W.B. Hudson, President of the Bihar Indigo Planters Association, wrote a letter to the government of India in March 1900, saying “natural indigo dyes give a more durable colour than artificial dyes – the latter will not displace natural dyes in the long term”.

He was wrong. Artificial indigo did displace the natural dye, and quickly. By the time Mr. Hudson wrote his letter, the disruption of natural indigo by synthetic indigo was already underway. In 1897, 19,000 tons of indigo were produced from plant sources. By 1914, annual natural indigo production had collapsed to 1,000 tons, a decline of almost 95% in a mere seventeen years.
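
For readers who want to check the arithmetic, here is a minimal sketch in Python using only the two production figures quoted above (19,000 tons in 1897 and 1,000 tons in 1914). The function names are our own illustrative choices, and the "implied annual change" assumes a constant year-on-year rate between the two endpoints, which is a simplification and not something the historical record shows.

```python
def percent_decline(start: float, end: float) -> float:
    """Total decline from start to end, as a percentage of start."""
    return (start - end) / start * 100.0


def implied_annual_change(start: float, end: float, years: int) -> float:
    """Constant yearly percentage change that would take start to end.

    Assumption: a smooth, constant rate. Real production figures
    would not have fallen this evenly from year to year.
    """
    return ((end / start) ** (1.0 / years) - 1.0) * 100.0


natural_1897 = 19_000  # tons of plant-derived indigo, from the text
natural_1914 = 1_000   # tons of plant-derived indigo, from the text
years = 1914 - 1897

decline = percent_decline(natural_1897, natural_1914)
annual = implied_annual_change(natural_1897, natural_1914, years)

print(f"Years elapsed: {years}")
print(f"Total decline: {decline:.1f}%")
print(f"Implied average annual change: {annual:.1f}%")
```

The total decline works out to just under 95%, matching the figure in the text. Under the constant-rate assumption, that corresponds to natural indigo output shrinking by roughly a sixth every year for seventeen years.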

“In India,” Virginia Postrel wrote in her 2020 book The Fabric of Civilization: How Textiles Made the World, “the transition was particularly abrupt. In its peak year, ending in March 1895, British India exported more than nine thousand tons of indigo dye. A decade later, that volume had plummeted by 74 percent while the revenue received dropped by 85 percent.”

Why do smart people in smart organizations fail to anticipate disruption?

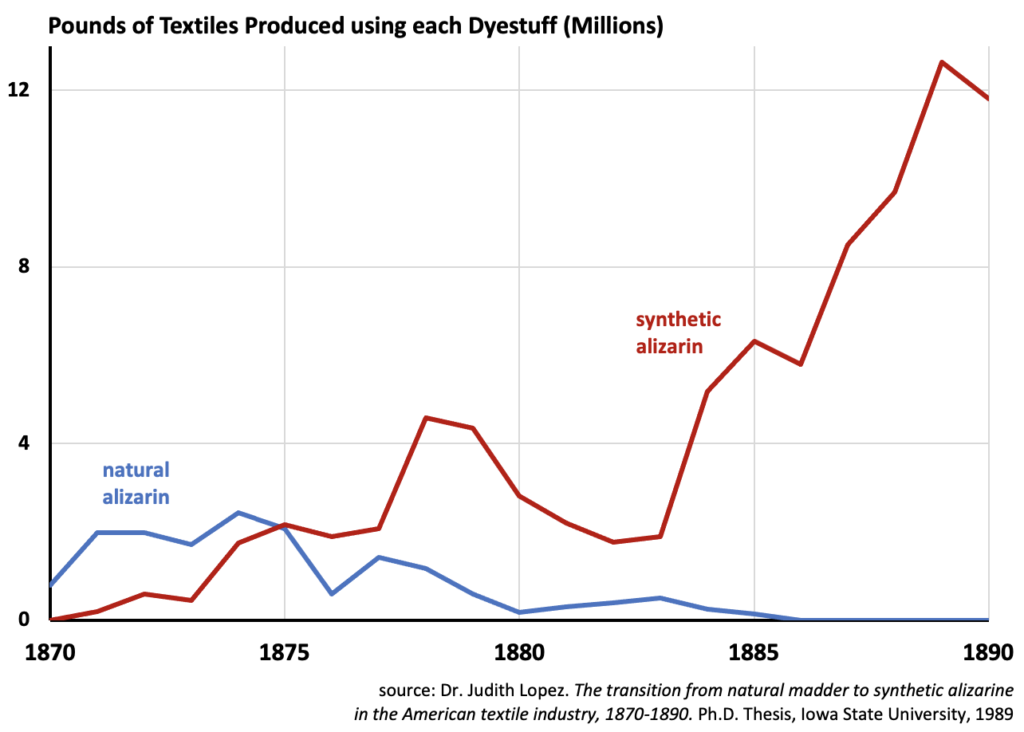

It is not as though the disruption of a dyestuff was unprecedented – the natural red dye alizarin was disrupted by synthetic red dyes about twenty years prior, again due principally to the work of German chemists, as we pointed out in ‘X Marks Disruption’ (page 33 in our report Rethinking Climate Change).

According to the doctoral thesis of Dr. Judith Lopez at Iowa State University, synthetic alizarin quickly replaced natural sources of the red dye (like the madder plant). Madder was “the world’s most widely and continuously used red dyestuff” for many centuries. Yet in about fifteen years, from 1870 to 1885, the natural madder dye was completely wiped out in commercial applications.

This was not just a one-for-one replacement – the cheaper synthetic dye expanded the overall market. By the late 1880s, the amount of fabric dyed with synthetic red dye was more than five times the amount dyed with natural red dye fifteen years prior.

The main reason smart folks in smart organizations fail to anticipate disruption, then, is that they tend to be caught up in the assumptions and mindset of incumbent industries – many of which, like the production of dyes from natural sources, have been around for centuries, or even millennia. The belief in the invincibility of the status quo dominates thinking. And the inability to understand the fundamentally different dynamics and possibilities of the new industries locks them into a downward spiral of ‘more of the same’ – which only accelerates their decline.

Yet the same pattern of disruption that led to the prevalence of petroleum-produced dyes might disrupt them, too, thanks to innovations in precision biology that are experiencing exponential improvements in cost, efficiency and performance.

Huue, a California startup company founded by biologists Tammy Hsu and Michelle Zhu, is seeking to commercially produce indigo from genetically modified bacteria. “We are using a biological process to produce the indigo,” Zhu said in an interview with Fast Company magazine. “So, instead of needing the petroleum base for it, we can literally program bacteria, microbes to grow and secrete the indigo without the need for toxic chemicals.”

French biotech company Pili Bio and UK-based Colorifix are taking a similar approach. This is not science fiction – clothing dyed pink using genetically modified microbes is already available for sale. In an interview in another issue of Fast Company magazine, Colorifix says that “the conventional dye process takes between five and eight hours at high temperatures, and often requires as many as five washes; Colorifix’s process takes three hours and a single wash.”

Considering that about 20% of global water pollution stems from the textile industry, any improvement to how fabrics are dyed could have enormous benefits for the environment and human health.

The rubber revolution

The synthetic chemistry industry, using feedstock chemicals from coal tar or from petroleum, gave rise to the first artificial dyes and to the pharmaceutical industry. But it also led to a variety of other applications, including plastics, artificial fibers, and synthetic rubber. In his 1894 interview Professor Berthelot said, “long before the promised failure of the rubber trees to supply the demands of commerce, synthetic rubber will, in all probability, have filled the void.”

Like dyes, rubber is ubiquitous, used in everything from tires to automobile padding, from shoes to electrical insulation, and from flooring to printing. So it was a serious problem when the entrance of the United States into World War II suddenly cut off America’s supplies of some key materials from Asia, including silk and rubber.

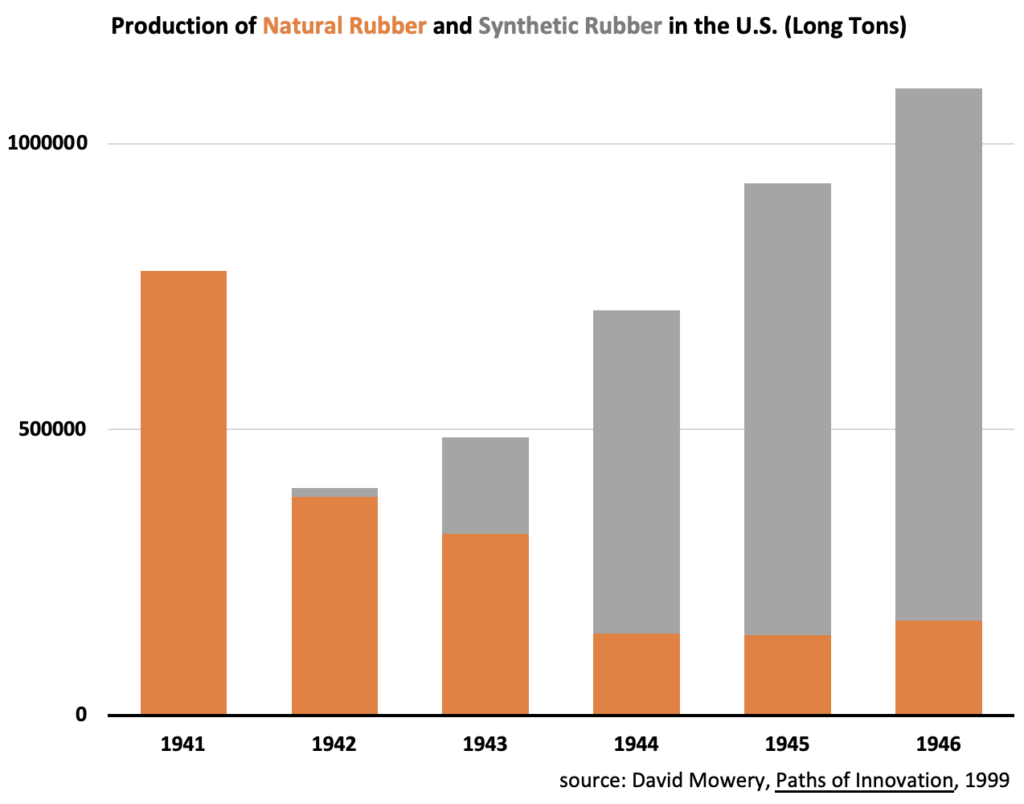

In Paths of Innovation: Technological Change in 20th-Century America, author David Mowery writes that “the federal government invested approximately $700 million in the construction of fifty-one plants that produced the essential monomer and polymer intermediates needed for the manufacture of synthetic rubber… The synthetic rubber program was second only to the Manhattan Project in terms of rapid and extensive mobilization of human resources in order to achieve an urgent wartime goal.”

The resulting transformation of the U.S. rubber industry during World War II was nothing short of breathtaking. “In 1940,” Mowery wrote, “natural rubber accounted for 99.6% of the U.S. rubber market and synthetic rubber a mere 0.4%.” But under the U.S. WWII synthetic rubber program, rubber production in the U.S. went from approximately 750,000 tons in 1941, almost all of which was from natural sources, to over one million tons five years later, more than 85% of which was synthetic. (According to Katrina Cornish of the USDA, global production of natural rubber bounced back after the war ended, temporarily overtaking synthetic production for fifteen years until 1960. After that, synthetic rubber production consistently exceeded natural rubber production worldwide for the remainder of the century.)

As with many other disruptions, this was not a one-for-one replacement of natural rubber with synthetic alternatives, but instead resulted in significant growth of the total market.

The process of making the precursor chemicals for synthetic rubber production itself underwent a radical change during the wartime build-up of this new industry – a sort of ‘disruption within a disruption’. According to Frank Howard’s 1947 book Buna Rubber: The Birth of an Industry, while in 1943 the production of the key precursor molecule for making synthetic rubber came largely from corn-fermented alcohol, by the end of 1944 the precursor was derived primarily from petroleum. Why was this switch so rapid? According to Paul Wendt in the January 1947 article, ‘The Control of Rubber in World War II’, the rubber precursor “produced in these [alcohol-based] plants cost roughly five times that produced in the petroleum plants.”

As we have seen repeatedly in our ‘pattern of disruption’, whenever a new technology performs well enough to meet a given need while costing significantly less than the incumbent solution, the switch from the old technology to the new one is likely to be quick.

These different examples of the pattern of disruption highlight one of the biggest dangers when looking at today’s disruptions: the prevailing belief that everything is going to remain pretty much the same, because that’s how things have been for centuries. This couldn’t be further from the truth. Just because an industry has been dominant for decades, if not centuries or millennia, doesn’t mean that it won’t be outcompeted, or in some cases even disappear, due to disruption. And what’s more, that process – once it starts – rarely happens slowly. In fact, it usually happens within one or two decades.

At the end of his interview, Berthelot speculated as to the consequences of what might happen when we make our meat, milk, dyes and rubber, rather than grow them:

“One can foresee the disappearance of the beasts from our fields, because horses will no longer be used for traction or cattle for food. The countless acres now given over to growing grain and producing vines will be agricultural antiquities, which will have passed out of the memory of men… The air will be filled with aërial motors flying by forces borrowed from chemistry. Distances will diminish, and the distinction between fertile and non-fertile regions, from the causes named, will largely have passed away.”

“These are dreams, of course,” added the Professor, “but science may surely be permitted to dream sometimes.”

Today, the most consequential disruptions are unfolding in the energy, transportation and food sectors. Get ready for rapid, total transformation. The pattern of disruption has shown that even ancient dreams can quickly become reality.

===

This is Part 3 in our series ‘The Pattern of Disruption’. Part 1 is available here. Part 4, “The Disruption of Slavery Unveils Fastest Path to End Today’s Wars” is here.